Interactive audio-haptic perception for blind and low-vision users

PI: Kathleen McKeown

Co-PIs: Tatiana Aloi Emmanouil, CUNY; Nikolaus Kriegeskorte, Columbia; Brian Smith, Columbia; Carl Vondrick, Columbia

Abstract

A picture is worth a thousand words, but only if the viewer can experience it. Our project aims to help blind and low-vision (BLV) people experience works of art, including their sensory and semantic dimensions, their emotional valence, the artist’s intentions, and other contextual information. Our project brings together researchers from vision, language, and interaction design, all informed by cognitive science, to develop AI Art Immersion for the visually impaired. Our work is inspired by the human visual system, which gives us an instant sense of our surroundings and lets us explore a complex scene interactively with eye movements and internal shifts of attention, enabling us to rapidly grasp the layout, the objects and characters present, and how the elements relate to each other.

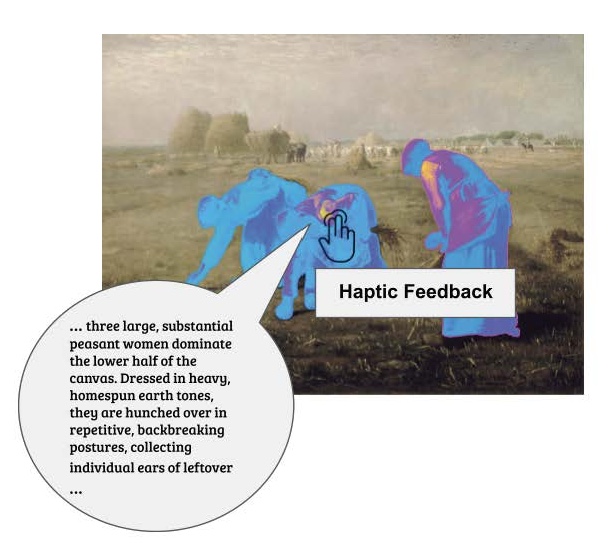

Figure 1 shows an output generated by our approach.

Haptics component: For BLV people, haptics is an important modality for both perceiving and exploring the environment. Importantly, cognitive scientists have shown that the senses of touch and hearing can offer the same cognitive benefits and functional performance as vision, provided that a person is able to explore scenes just as fully with those senses.

Publications

J., Purvis, A., Krut, K., Stein, R., Pantalony, R. E., Bansal, M., & McKeown, K. (2025). PoSh: Using Scene Graphs To Guide LLMs-as-a-Judge For Detailed Image Descriptions. arXiv. https://doi.org/10.48550/ARXIV.2510.19060

Resources

In progress