Evaluating Sparsely Structured Selectivity in Neural Codes

PI: Stefano Fusi

Co-PIs: Xaq Pitkow, CMU; Andreas Tolias, Stanford

Abstract

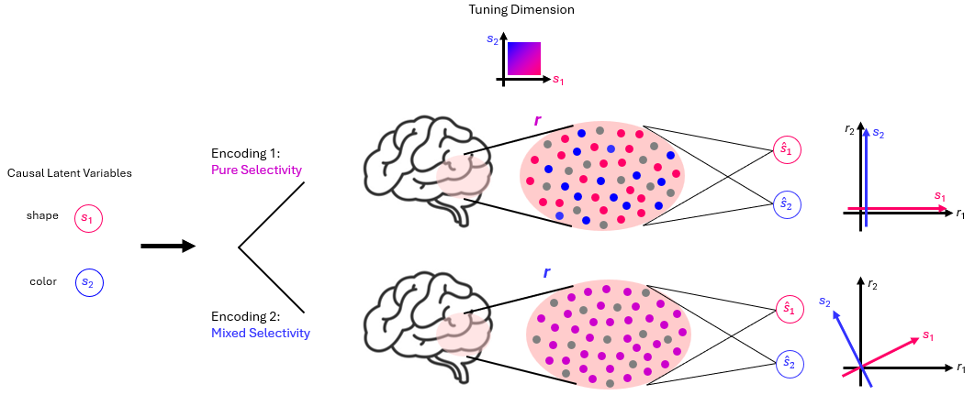

Sparse selectivity, the hypothesis that each neuron's response encodes only a small subset of the causal latent variables, is poorly understood, both in how to detect it from data and in what computational advantage it confers. We address these gaps by (1) characterizing the precise conditions under which existing methods fail and proposing an improved Gaussian mixture model-based method to detect potential sparse selectivity, and, more substantively, (2) developing a rigorous theoretical framework showing that sparse selectivity confers a sample-efficiency advantage in neural decoding: it reduces the effective parameter burden on a downstream decoder, enabling reliable generalization from fewer training trials.

Figure 1: Representational Properties of Two Encoding Strategies: Pure (Sparsest) vs. Mixed (Dense)
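
To make the pure versus mixed distinction concrete, the sketch below builds two synthetic populations and scores how sparse each neuron's selectivity is. It is only an illustration under stated assumptions: the weight matrices are simulated, the names `selectivity_sparsity`, `pure`, and `mixed` are hypothetical, and the score is a simple Hoyer-style sparsity index, not the Gaussian mixture model-based method described in the abstract.

```python
import numpy as np

def selectivity_sparsity(weights):
    """Hoyer-style sparsity of each neuron's weights over the latent variables.

    Returns 1 for a neuron that weights a single latent and 0 for a neuron
    that weights all latents with equal magnitude.
    """
    w = np.abs(np.asarray(weights, dtype=float))
    n_latents = w.shape[1]
    l1 = w.sum(axis=1)
    l2 = np.sqrt((w ** 2).sum(axis=1))
    return (np.sqrt(n_latents) - l1 / l2) / (np.sqrt(n_latents) - 1.0)

rng = np.random.default_rng(0)
n_neurons, n_latents = 200, 5  # assumed sizes, chosen for illustration only

# Pure (sparsest) code: each neuron has one nonzero weight on a random latent.
pure = np.zeros((n_neurons, n_latents))
preferred = rng.integers(0, n_latents, size=n_neurons)
signs = rng.choice([-1.0, 1.0], size=n_neurons)
pure[np.arange(n_neurons), preferred] = signs * rng.uniform(0.5, 1.5, size=n_neurons)

# Mixed (dense) code: each neuron has Gaussian weights on every latent.
mixed = rng.normal(size=(n_neurons, n_latents))

pure_score = selectivity_sparsity(pure).mean()
mixed_score = selectivity_sparsity(mixed).mean()
print(f"mean sparsity, pure:  {pure_score:.2f}")
print(f"mean sparsity, mixed: {mixed_score:.2f}")
```

In this toy setting the pure population scores at the maximum of the index and the dense population scores well below it. With real recordings the weights would have to be estimated from noisy data, which is where a dedicated detection method becomes necessary.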

Publications

In progress

Resources

In progress