A Study of Stable and Plastic Structure in Representations From Artificial and Natural Continual Learning Systems

PI: Richard Zemel

Co-PI: Stefano Fusi, Columbia

Abstract

Artificial intelligence (AI) systems that learn tasks sequentially, a regime known as continual learning, struggle to maintain performance on previously learned tasks. This phenomenon is known as catastrophic forgetting. In the case of large language models, pretraining on enormous corpora of unstructured data before fine-tuning on a specific task has been shown to yield models that learn the first new task efficiently but struggle to retain this efficiency on subsequent tasks [1–3]. This is in stark contrast to natural learning systems like the human brain, which seem not only to retain much of their performance on previously learned tasks but also to learn new tasks more efficiently by bootstrapping from previously learned information. How can a system have both the plasticity to learn and excel at new tasks and the stability to maintain previously learned capabilities? How can information be structured within the system so that old knowledge can be used to accelerate learning? This project aims not only to describe quantitatively how neural representations change over time and as separate tasks are learned, but also to provide theoretical models for decoding from these representations without forgetting.

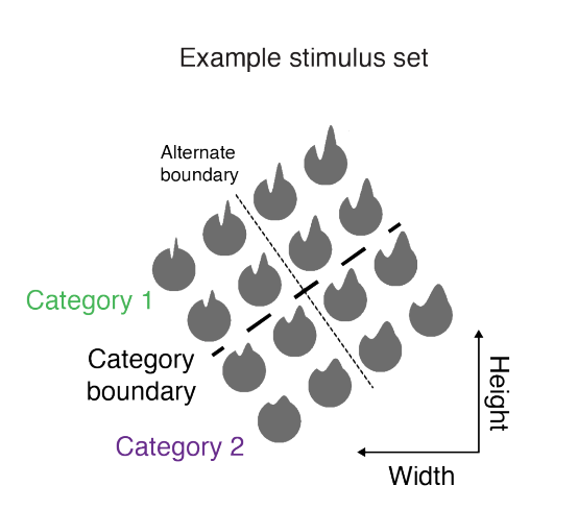

Figure 1: An example of a category boundary that monkeys learn

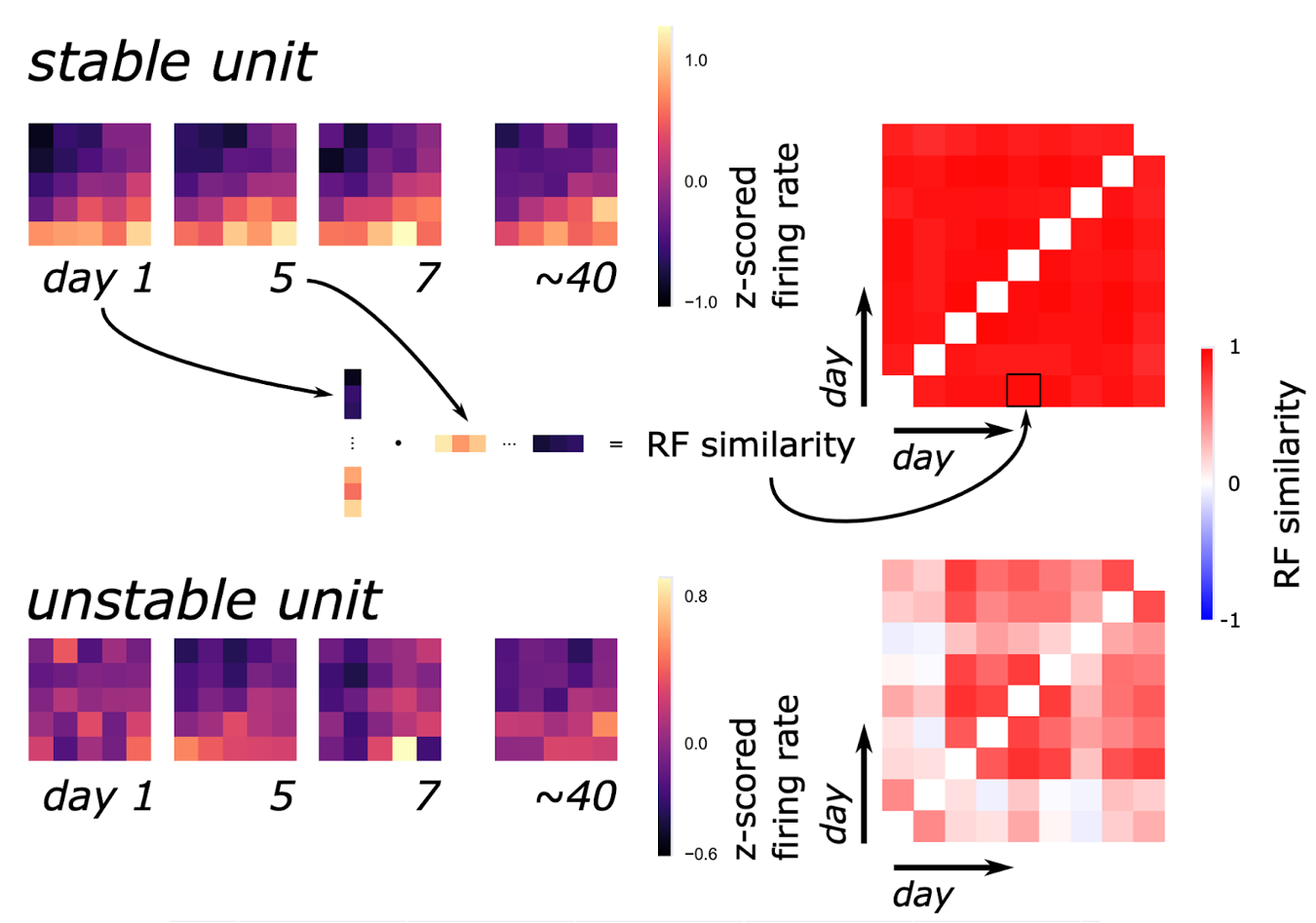

Figure 2: Examples of stable/unstable units and their corresponding cross-day similarity maps

Publications

In progress

Resources

In progress