Analysis and Metrics for Continual Learning and Continual Meta Learning

PI: Richard Zemel

Co-PIs: Kathy McKeown, Columbia; Andreas Tolias, Stanford; Kim Stachenfeld, Columbia; Toni Pitassi, Columbia; David Schwab, CUNY; Mohammadreza Davoodi, Memphis

Abstract

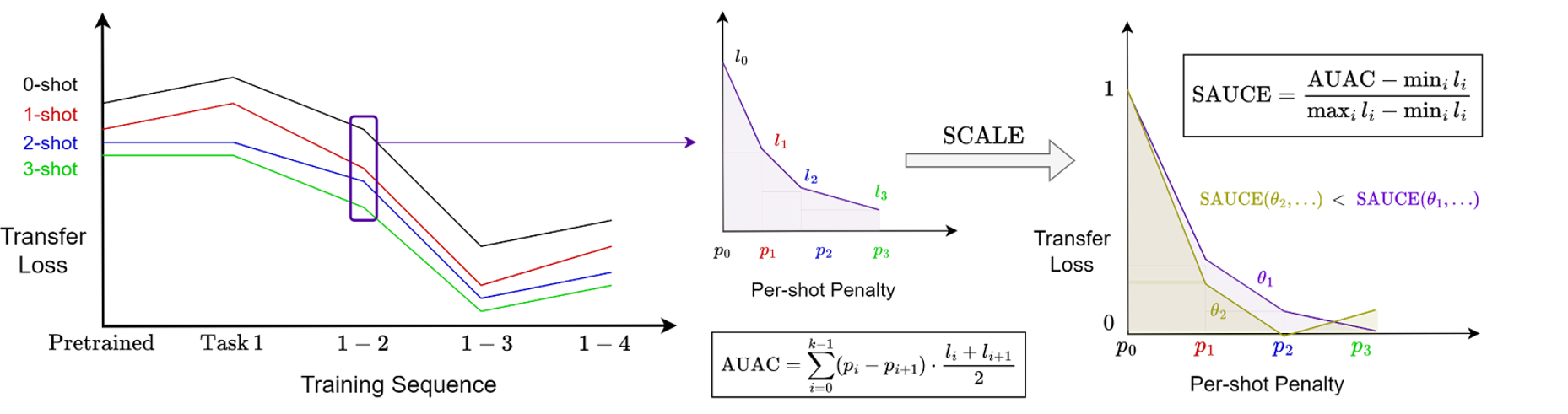

Continual learning methods aim to maximize the stability and plasticity of machine learning models that are trained on a sequence of tasks. The standard measure of stability (i.e., resistance to forgetting) is the 0-shot performance of a model on previously learned tasks, and the standard measure of plasticity is its performance on the most recently learned task. However, 0-shot evaluation does not fully measure a model's or method's ability to retain learned information or to adapt quickly to new information, as it requires perfect recall across multiple tasks. In this paper, we propose few-shot evaluation as a more comprehensive assessment of the stability and plasticity of a continual learning system. Through few-shot evaluation with a novel metric, per-shot plasticity, on task sequences in the vision and language domains, we aim to show that a mixture of ‘foresight’, via meta-learning on a short sequence of future tasks, and ‘hindsight’, via replay of previously learned tasks, leads to a model that strikes an ideal balance between stability and plasticity in a continual learning setting.

Figure 1: k-shot evaluation shows reduced forgetting and increased forward transfer for baselines

Figure 2: SAUCE measures the rate of improvement of the model as task examples are added
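SAUCE (Scaled Area Under the Adaptation Curve) is described here only at a high level, so the following is a hypothetical sketch of one way such a score could be computed, not the project's definition. It assumes an adaptation curve given as scores at strictly increasing shot counts, integrates the gain over the first evaluated shot count (e.g., 0-shot) with the trapezoidal rule, and divides by the width of the shot range. The function name `sauce_sketch`, the choice of baseline, and this normalization are all assumptions.

```python
from typing import Sequence


def sauce_sketch(shots: Sequence[int], scores: Sequence[float]) -> float:
    """Hypothetical SAUCE-style score (assumption, not the official definition).

    Integrates the gain over the first evaluated shot count with the
    trapezoidal rule, then divides by the width of the shot range, giving
    the average gain along the shot axis. A model that improves with fewer
    examples accumulates more area than one that improves late.
    """
    if len(shots) != len(scores) or len(shots) < 2:
        raise ValueError("need at least two (shot, score) pairs of equal length")
    if any(k1 <= k0 for k0, k1 in zip(shots, shots[1:])):
        raise ValueError("shot counts must be strictly increasing")

    baseline = scores[0]  # performance before any (or the fewest) task examples
    gains = [s - baseline for s in scores]

    area = 0.0
    for i in range(len(shots) - 1):
        width = shots[i + 1] - shots[i]
        area += width * (gains[i] + gains[i + 1]) / 2.0  # one trapezoid
    return area / (shots[-1] - shots[0])  # scale by the shot range


# Two illustrative adaptation curves with the same start and end accuracy.
shots = [0, 1, 2, 4]
fast = sauce_sketch(shots, [0.5, 0.6, 0.7, 0.8])
slow = sauce_sketch(shots, [0.5, 0.5, 0.5, 0.8])
print(f"fast={fast:.3f} slow={slow:.3f}")  # fast=0.175 slow=0.075
```

Because the area is taken over gains rather than raw scores, the two curves above receive different values even though they share endpoints, which matches the idea of rewarding a higher rate of improvement as task examples are added.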

Publications

- Zhang, Y., McKeown, K., & Muresan, S. (2026). LiveNewsBench: Evaluating LLM web search capabilities with freshly curated news. arXiv. https://doi.org/10.48550/arXiv.2602.13543

Resources

Benchmarks and Datasets

- LiveNewsBench: A continuously updated benchmark for evaluating agentic web search capabilities in large language models, featuring automatically generated and human-verified question-answer pairs from real-time news

- Day in the Life Benchmark Suite (in development): Multilingual and real-world continual learning benchmarks, including affective state identification across eight languages, designed to capture dynamic, recurring task structures

Tools and Metrics

- Scaled Area Under the Adaptation Curve (SAUCE): A novel metric for quantifying adaptation efficiency in continual learning systems

- Few-shot evaluation framework for assessing stability and plasticity in sequential learning settings
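The stability and plasticity measures defined in the abstract can be computed at any number of shots from an accuracy matrix. The sketch below assumes a hypothetical layout in which `acc[i][j]` is accuracy on task j after training sequentially on tasks 0..i and then adapting a copy of the model on k labeled examples of task j, so k = 0 recovers the standard 0-shot measures. The function names and all numbers are illustrative assumptions, not results from this project, and the sketch does not implement the per-shot plasticity metric.

```python
from typing import Dict, List, Tuple

Matrix = List[List[float]]


def stability(acc: Matrix) -> float:
    """Mean accuracy on previously learned tasks after the final task.

    Assumed layout: acc[i][j] is accuracy on task j after training on tasks
    0..i (and, for k > 0, after adapting on k examples of task j).
    """
    if len(acc) < 2:
        raise ValueError("need at least two tasks to measure stability")
    earlier = acc[-1][:-1]  # final row, excluding the most recently learned task
    return sum(earlier) / len(earlier)


def plasticity(acc: Matrix) -> float:
    """Mean accuracy on each task right after it was learned (the diagonal)."""
    return sum(acc[i][i] for i in range(len(acc))) / len(acc)


def report(by_shots: Dict[int, Matrix]) -> Dict[int, Tuple[float, float]]:
    """Map each shot count k to its (stability, plasticity) pair."""
    return {k: (stability(m), plasticity(m)) for k, m in sorted(by_shots.items())}


# Illustrative 3-task matrices; entries above the diagonal are not used here.
zero_shot = [[0.9, 0.1, 0.1], [0.5, 0.9, 0.1], [0.4, 0.6, 0.9]]
five_shot = [[0.9, 0.3, 0.3], [0.8, 0.9, 0.3], [0.75, 0.85, 0.9]]

for k, (stab, plas) in report({0: zero_shot, 5: five_shot}).items():
    print(f"k={k}: stability={stab:.2f} plasticity={plas:.2f}")
```

In this toy example, a few adaptation examples reveal that much of the apparent forgetting under 0-shot evaluation is recoverable, which is the kind of retained information that 0-shot evaluation alone does not capture.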

Code and Resources

- LiveNewsBench GitHub repository: https://github.com/LiveNewsBench/LiveNewsBench/tree/main

- SAUCE metric and associated evaluation tools (in development)