Constructing cognitive architectures from interacting LLMs for human-like cognition

PI: Tom Griffiths

Co-PIs: Xaq Pitkow, CMU; Sean Escola, Protocol Labs

Abstract

While contemporary large language models (LLMs) are increasingly capable in isolation, many difficult problems still lie beyond the abilities of a single LLM. For such problems, it remains unclear how best to combine many LLMs as parts of a greater whole. This project explores how the existing literature on cognitive models and artificial intelligence (AI) algorithms can supply blueprints for designing such modular language agents.

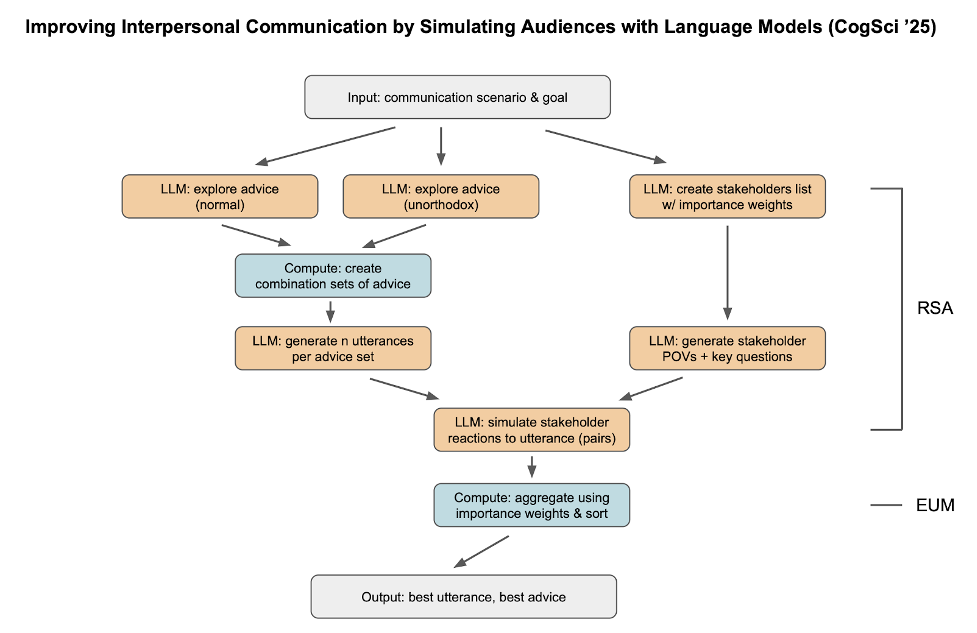

Figure 1: An example of a language agent for communication, constructed using principles from the Rational Speech Act theory developed in cognitive science.
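
To make the modular idea concrete, the following is a minimal sketch of the standard Rational Speech Act recursion (literal listener, pragmatic speaker, pragmatic listener) on a toy reference game. It is not the agent shown in Figure 1: the objects, utterances, hand-coded lexicon, uniform priors, zero utterance cost, and all function names are assumptions made for illustration. In a language agent, each of the three functions could plausibly be backed by an LLM call rather than a lookup table, but that substitution is likewise an assumption.

```python
# Toy reference game. All names and values are hypothetical.
OBJECTS = ["blue_square", "blue_circle", "green_square"]
UTTERANCES = ["blue", "green", "square", "circle"]


def literal_meaning(utterance, obj):
    """Return 1.0 if the utterance is literally true of the object, else 0.0."""
    return 1.0 if utterance in obj.split("_") else 0.0


def normalize(scores):
    """Rescale non-negative scores so they sum to 1 (left unchanged if all are zero)."""
    total = sum(scores.values())
    return {k: v / total for k, v in scores.items()} if total > 0 else scores


def literal_listener(utterance):
    """L0: P(obj | utterance) proportional to literal truth, with a uniform prior."""
    return normalize({o: literal_meaning(utterance, o) for o in OBJECTS})


def pragmatic_speaker(obj, alpha=1.0):
    """S1: P(utterance | obj) proportional to L0(obj | utterance) ** alpha."""
    return normalize({u: literal_listener(u)[obj] ** alpha for u in UTTERANCES})


def pragmatic_listener(utterance, alpha=1.0):
    """L1: P(obj | utterance) proportional to S1(utterance | obj), uniform prior."""
    return normalize({o: pragmatic_speaker(o, alpha)[utterance] for o in OBJECTS})


if __name__ == "__main__":
    print("L0 hears 'blue':", literal_listener("blue"))
    print("L1 hears 'blue':", pragmatic_listener("blue"))
```

In this toy setting, the literal listener who hears "blue" is split evenly between the two blue objects, while the pragmatic listener leans toward the blue square, since a speaker who meant the blue circle would more likely have said "circle". Each layer only consumes the output of the layer beneath it, which is what makes the recursion a candidate blueprint for composing separate modules.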

Publications

In progress

Resources

In progress