Modular Computations in AI and Neuroscience: Principles and Applications

PI: Ken Miller

Co-PIs: Larry Abbott, Columbia; Tahereh Toosi, Columbia

Abstract

Modularity is a fundamental organizing principle in both biological and artificial intelligence systems. In the brain, visual processing divides into functionally specialized pathways: a dorsal pathway for spatial and action-oriented processing and a ventral pathway for object recognition. In AI, Mixture-of-Experts architectures selectively activate specialized subnetworks. Despite these parallels, we lack a fundamental understanding of why such modularity emerges and how to design effective modular systems. This project systematically investigates the emergence and functional significance of modular organization across biological and artificial vision systems, using a comparative approach spanning multiple species (mouse, monkey, human) and model architectures. We test three hypotheses: the Task-Tension Hypothesis (computational tensions naturally drive modularity in self-supervised models), the Perspective-Induced Hypothesis (egocentric vs. allocentric processing drives specialization), and the Agent-Action Hypothesis (action requirements drive a dorsal/ventral-like division in reinforcement learning (RL) agents).

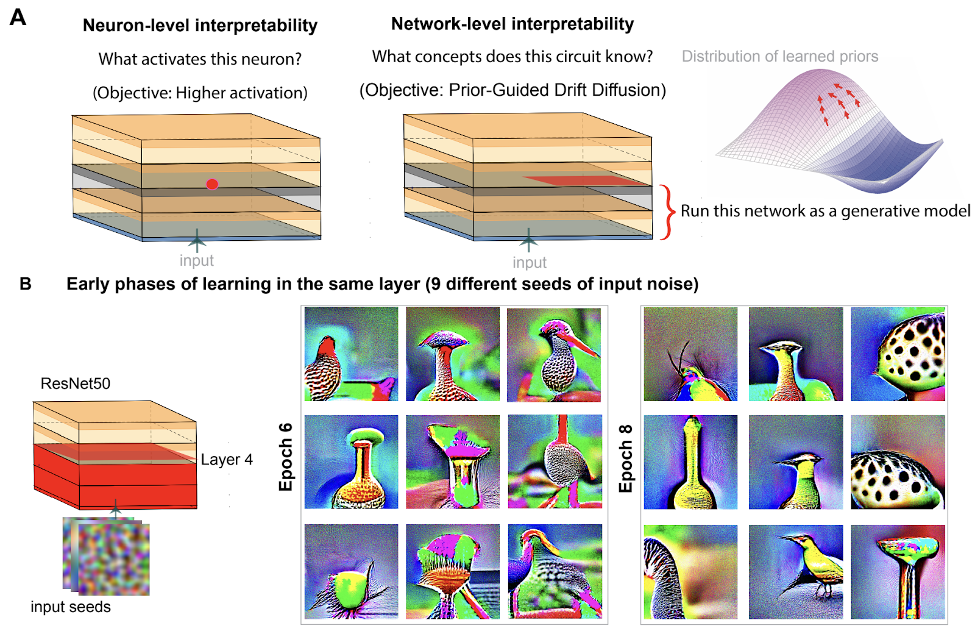

Figure 1: Network-level interpretability through implicit generative operators. Prior-Guided Drift Diffusion (PGDD) shifts the analysis from the neuron level to the network level by running networks as generative models to probe their learned priors.

Publications

- Toosi, T. (2025, September 30). Interpretability at the Network Level: Prior-Guided Drift Diffusion for Neural Circuit Analysis. Mechanistic Interpretability Workshop at NeurIPS 2025. https://openreview.net/forum?id=vL28JhJDEM

- Toosi, T., & Miller, K. D. (2025, September 23). Unifying Gestalt Principles Through Inference-Time Prior Integration. First Workshop on CogInterp: Interpreting Cognition in Deep Learning Models. https://openreview.net/forum?id=WksqQxYZXs

- Toosi, T., & Miller, K. D. (2025). Natural Scene Coding Consistency in Genetically-Defined Cell Populations. COSYNE 2026. Poster. https://doi.org/10.1101/2025.10.29.685363

- Toosi, T., & Miller, K. D. (2026). Feedback-Mediated Prior Integration Unifies Gestalt Perceptual Organization. Vision Sciences Society. Oral Presentation.

- Toosi, T., & Miller, K. D. (2025). Generative inference unifies feedback processing for learning and perception in natural and artificial vision. https://doi.org/10.1101/2025.10.21.683535

Resources

- HuggingFace demo – Predicting human hallucination based on visual input: https://huggingface.co/spaces/ttoosi/Human_Hallucination_Prediction

- HuggingFace demo – Predicting what humans see based on perceptual organization laws: https://huggingface.co/spaces/ttoosi/GenerativeInferenceDemo