Predictive coding with memory-based targets

PI: Blake Richards

Co-PI: Richard Zemel

Abstract

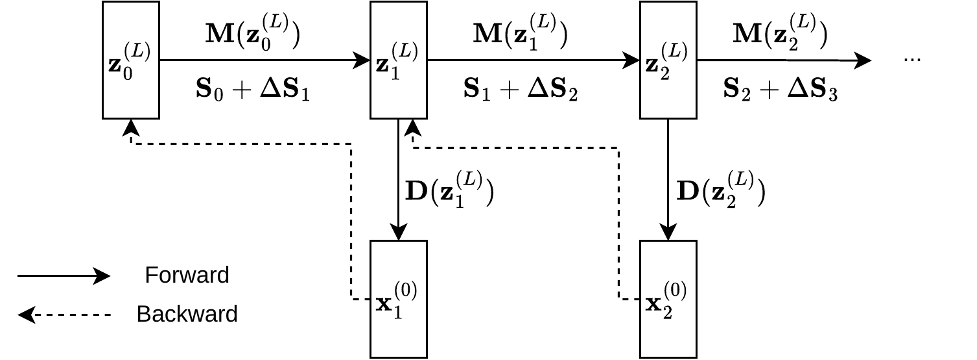

This project extends predictive coding, a biologically plausible theory of cortical computation, from static to sequential data by incorporating an associative memory module. The model is trained autoregressively with a self-supervised objective, which allows it to learn rich representations of sequential data and to recall relevant information from past inputs. Importantly, the approach does not rely on backpropagation through time, which makes it more biologically plausible and more memory-efficient as sequences grow longer. The architecture uses a DeltaNet-style associative memory that stores and retrieves key-value pairs, with a learned modulation of its plasticity analogous to neuromodulation.

Figure 1: One of our proposed architectures, where M represents the memory module that stores long-context information.
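The abstract does not spell out how the memory M is updated. DeltaNet-style memories typically use the delta rule: a matrix S maps keys to values, and each write moves the value that S currently returns for key k toward a target v, S ← S + β(v - Sk)kᵀ, where β in [0, 1] scales how strongly the memory changes. The following is a minimal pure-Python sketch under that assumption only. The names `DeltaMemory` and `plasticity_gate`, and the use of a sigmoid gate to produce β as a stand-in for learned neuromodulation, are hypothetical illustrations and are not taken from the project's code.

```python
import math

Vector = list[float]
Matrix = list[list[float]]


def l2_normalize(x: Vector) -> Vector:
    # Unit-norm keys keep the delta-rule write stable (assumption, as in DeltaNet).
    norm = math.sqrt(sum(xi * xi for xi in x)) or 1.0
    return [xi / norm for xi in x]


def plasticity_gate(features: Vector, weights: Vector) -> float:
    # Hypothetical learned gate: beta = sigmoid(w . x), a stand-in for neuromodulation.
    z = sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


class DeltaMemory:
    """Hypothetical DeltaNet-style associative memory: a matrix S maps keys to values."""

    def __init__(self, key_dim: int, value_dim: int) -> None:
        # S has shape (value_dim, key_dim) and starts empty (all zeros).
        self.S: Matrix = [[0.0] * key_dim for _ in range(value_dim)]

    def read(self, query: Vector) -> Vector:
        # Retrieval is a matrix-vector product: v_hat = S q.
        q = l2_normalize(query)
        return [sum(s_ij * q_j for s_ij, q_j in zip(row, q)) for row in self.S]

    def write(self, key: Vector, value: Vector, beta: float) -> Vector:
        # Delta rule: S <- S + beta * (v - S k) k^T.
        # beta = 1 overwrites the value stored at k; smaller beta blends old and new.
        k = l2_normalize(key)
        prediction = self.read(k)
        error = [v_i - p_i for v_i, p_i in zip(value, prediction)]
        for i, row in enumerate(self.S):
            for j in range(len(row)):
                row[j] += beta * error[i] * k[j]
        # Return the error that drove the write, for inspection.
        return error


if __name__ == "__main__":
    mem = DeltaMemory(key_dim=2, value_dim=2)
    mem.write([1.0, 0.0], [3.0, -1.0], beta=1.0)
    mem.write([0.0, 1.0], [0.5, 2.0], beta=1.0)
    print(mem.read([1.0, 0.0]))  # [3.0, -1.0]
    # A half-strength write moves the stored value halfway toward the new target.
    mem.write([1.0, 0.0], [1.0, 1.0], beta=0.5)
    print(mem.read([1.0, 0.0]))  # [2.0, 0.0]
```

Each write uses only the current key, value, and matrix S, so the memory never needs to revisit earlier inputs; this is consistent with the abstract's point about avoiding backpropagation through time, although how the surrounding predictive-coding network is trained is not shown here. The write is also driven by the error v - Sk between the target and the memory's current prediction, which is loosely reminiscent of the prediction errors used in predictive coding.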

Publications

In progress

Resources

In progress