Principles of flexible and robust decision making using multiple sensory modalities

PI: Pratik Chaudhari

Co-PIs: Joshua Gold and Vijay Balasubramanian

Abstract

This project investigates a characteristic structure of learnable tasks called “sloppiness”. Neural networks trained on sloppy tasks have a Fisher Information Metric (FIM) whose eigenvalues are distributed uniformly on a logarithmic scale. This indicates a large degree of redundancy in the learned parameters: one set of parameters is tightly constrained by the data, another can vary twice as much without affecting predictions, and so on. The goals of this project are to (a) expand and formalize our understanding of which structures in perception and decision-making tasks affect generalization in neural networks, and (b) apply these ideas to understand how human and non-human primates learn tasks, and adapt to changes in tasks, that require inferences from multi-modal sensory inputs in complex, dynamic environments.
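To make the eigenvalue picture concrete, here is a minimal sketch, not the project's method. It computes the FIM of a small logistic-regression model in NumPy on synthetic inputs whose scales halve from one coordinate to the next. The model, the data, and all names (`logistic_fim`, `input_scales`, `tolerance_ratios`) are assumptions chosen for illustration, and the geometric spectrum is built into the inputs by hand rather than learned from a real task.

```python
import numpy as np


def logistic_fim(X, w):
    """Empirical Fisher Information Matrix of logistic regression.

    For p(y=1 | x) = sigmoid(w . x), the FIM is E_x[ p (1 - p) x x^T ].
    """
    p = 1.0 / (1.0 + np.exp(-(X @ w)))
    per_sample_weight = p * (1.0 - p)
    return (X * per_sample_weight[:, None]).T @ X / X.shape[0]


rng = np.random.default_rng(0)
n, d = 5000, 10

# Assumption: a hand-built "sloppy" input distribution in which the
# standard deviation of each coordinate is half that of the previous one.
input_scales = 2.0 ** (-np.arange(d))
X = rng.standard_normal((n, d)) * input_scales

# Assumption: an arbitrary small parameter vector stands in for a trained model.
w = 0.1 * rng.standard_normal(d)

fim = logistic_fim(X, w)
eigvals = np.sort(np.linalg.eigvalsh(fim))[::-1]  # descending order

# Log-uniform spectrum: log-eigenvalues are roughly linear in their index.
slope, intercept = np.polyfit(np.arange(d), np.log2(eigvals), 1)

# A parameter direction with eigenvalue lam can move by about 1/sqrt(lam)
# before predictions change appreciably.
tolerance = 1.0 / np.sqrt(eigvals)
tolerance_ratios = tolerance[1:] / tolerance[:-1]

print("slope of log2(eigenvalue) vs. index:", slope)
print("ratio of successive tolerances:", tolerance_ratios)
```

Under these assumptions the log-eigenvalues fall on an approximately straight line, and the tolerance along successive eigenvectors roughly doubles at each step, which is the "twice as much" pattern described above.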

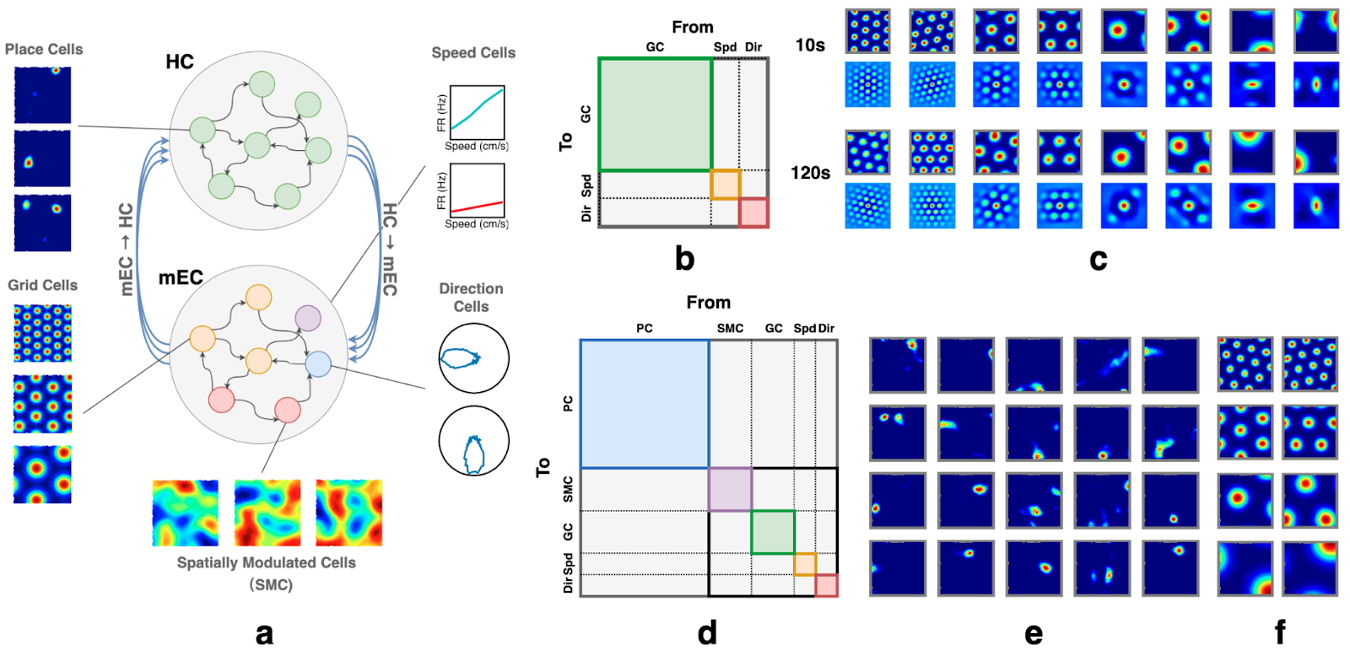

Figure 1: (a) RNN model of the HC-MEC loop. The top subnetwork contains HPCs, with example emergent place fields shown on the left. The bottom subnetwork includes partially supervised GCs, as well as supervised speed, head direction, and spatially modulated cells (SMCs), with example grid fields shown on the left. In (b) and (d), speed cells and head direction cells are denoted Spd and Dir, respectively. Colored regions highlight within-group recurrent connectivity to indicate how the connectivity matrix is partitioned by cell group; at initialization, however, no structural constraints are enforced and the full connectivity matrix is randomly initialized. (b) Illustration of the path-integration network’s connectivity matrix. (c) The network is trained to path-integrate on 5 s trials and tested on 10 s trials (L = 100.50 ± 8.49 cm); the grid fields remain stable even in trials of up to 120 s (L = 1207.89 ± 30.98 cm). For each subpanel (10 s, 120 s), the top row shows firing fields and the bottom row shows the corresponding autocorrelograms. (d) Illustration of the RNN connectivity matrix of the full HC-MEC loop. (e-f) Example place fields (emergent) and grid fields in the full HC-MEC RNN model.

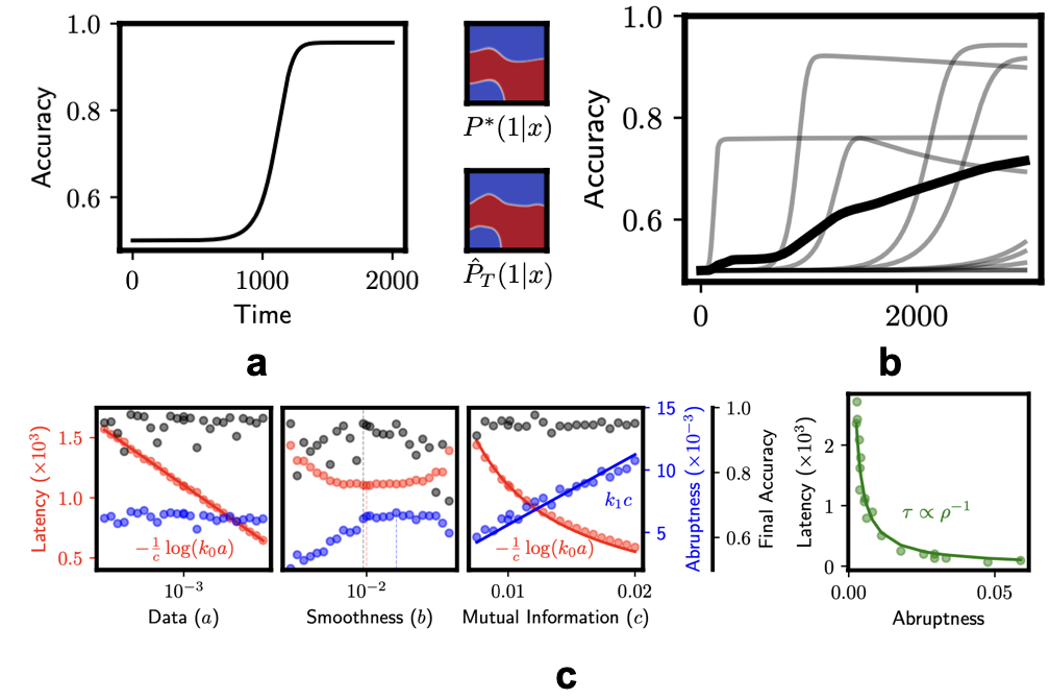

Figure 2: (a) Accuracy of the learner as it is presented with evidence incrementally over time, simulated using our analytical derivations. Heatmaps compare the true probability distribution P* with the learned distribution below. Red is high probability and blue is low probability. (b) Gallistel et al. (PNAS, 2004) observed that the learning curve (accuracy as a function of samples), when averaged over a group, is gradual (a power law) because each individual has a different latency and abruptness. Our analysis and data reproduce their experimental observations nearly perfectly. (c) Latency (red), abruptness (blue), and final accuracy after the transition (black) for different types of learners, given by the parameters (a, b, c). Left: The parameter a characterizes the influence of the current data point in updating the belief. The parameter b characterizes the strength of a smoothness-based prior employed by the optimistic student. The parameter c is the strength of a prior that regards the task as learnable, with high mutual information between its inputs and outputs. Right: Our analytical calculations match the inverse scaling law that was found experimentally by Gallistel et al.
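The averaging effect described in panel (b) can be illustrated with a toy simulation. This is a sketch under stated assumptions, not the analysis behind the figure: each simulated individual follows a sigmoidal accuracy curve, and the log-normal latency distribution, the uniform abruptness range, and the names `individual_curve` and `largest_jump` are all hypothetical choices made for illustration.

```python
import numpy as np


def individual_curve(t, latency, abruptness):
    """Accuracy rising from chance (0.5) to 1.0 around trial `latency`.

    Written with tanh, which equals a logistic sigmoid in
    abruptness * (t - latency) but cannot overflow.
    """
    return 0.75 + 0.25 * np.tanh(0.5 * abruptness * (t - latency))


rng = np.random.default_rng(0)
n_individuals, n_trials = 1000, 500
t = np.arange(1, n_trials + 1)

# Assumption: latencies vary widely across individuals (log-normal),
# and each individual's transition is abrupt (a few trials wide).
latencies = rng.lognormal(mean=4.0, sigma=1.0, size=n_individuals)
abruptness = rng.uniform(0.5, 2.0, size=n_individuals)

curves = individual_curve(t[None, :], latencies[:, None], abruptness[:, None])
group_mean = curves.mean(axis=0)


def largest_jump(curve):
    """Largest trial-to-trial increase in accuracy along the last axis."""
    return np.max(np.diff(curve, axis=-1), axis=-1)


individual_jumps = largest_jump(curves)  # one value per individual
group_jump = largest_jump(group_mean)    # a single scalar

print("median largest jump, individuals:", np.median(individual_jumps))
print("largest jump, group average:", group_jump)
```

In this toy setting the typical individual shows a much larger single-trial jump in accuracy than the group average does, so the averaged curve looks gradual even though each individual transition is abrupt.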

Publications

- Bosulu, J., Kilpatrick, Z.P., Josić, K., Gold, J. (2026). An Information Compass for Probabilistic Reasoning in the Brain. Preprint on arXiv soon.

- De Silva, Ashwin, Rahul Ramesh, Rubing Yang, Siyu Yu, Joshua T. Vogelstein, and Pratik Chaudhari. "Prospective Learning: Learning for a Dynamic Future." NeurIPS 2024. https://arxiv.org/abs/2411.00109

- Nadgir, A., Balasubramanian, V., Chaudhari, P. (2026). The Optimistic Student: A Belief in Learnability Leads To Sudden Insight and Superstition. Preprint on arXiv soon.

- Ramesh, R., Bisulco, A., DiTullio, R.W., Wei, L., Balasubramanian, V., Daniilidis, K. and Chaudhari, P., 2024. "Many Perception Tasks are Highly Redundant Functions of their Input Data". arXiv preprint https://arxiv.org/abs/2407.13841

- Rooke, S., Wang, Z., Di Tullio, R.W. and Balasubramanian, V., "Trading Place for Space: Increasing Location Resolution Reduces Contextual Capacity in Hippocampal Codes." NeurIPS 2024. https://www.biorxiv.org/content/10.1101/2024.10.29.620785v1

- Wang, Z., Di Tullio, R.W., Rooke, S. and Balasubramanian, V., "Time makes space: Emergence of place fields in networks encoding temporally continuous sensory experiences." NeurIPS 2024. https://arxiv.org/abs/2408.05798

Resources

Prospective Learning: Principled Extrapolation to the Future https://github.com/neurodata/prolearn and https://github.com/neurodata/prolearn/blob/main/tutorials/tutorial.ipynb

Time makes space: Emergence of place fields in networks encoding temporally continuous sensory experiences https://github.com/zhaozewang/place_cells_episodic_rnn